July 2025

Spellmotion

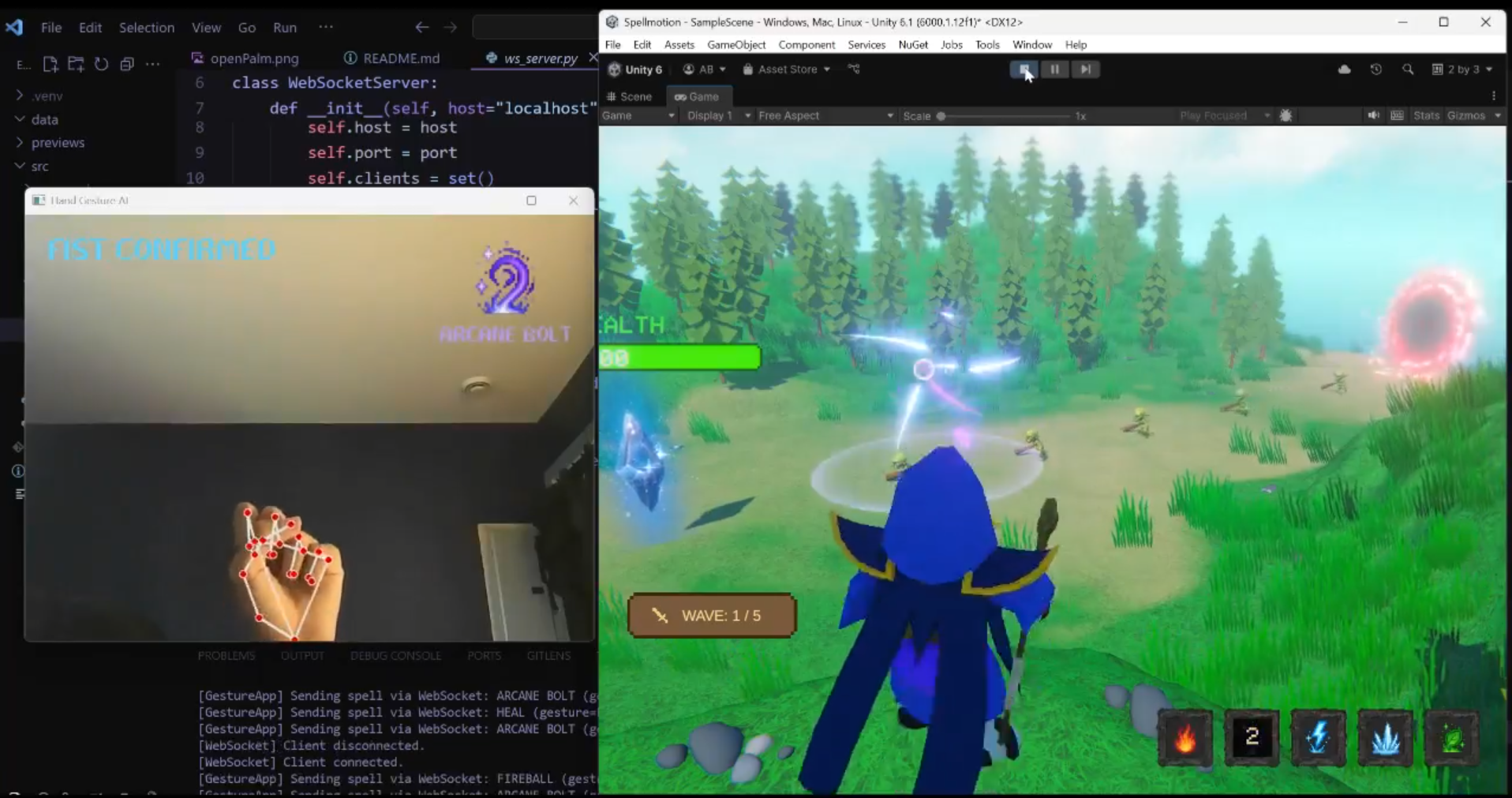

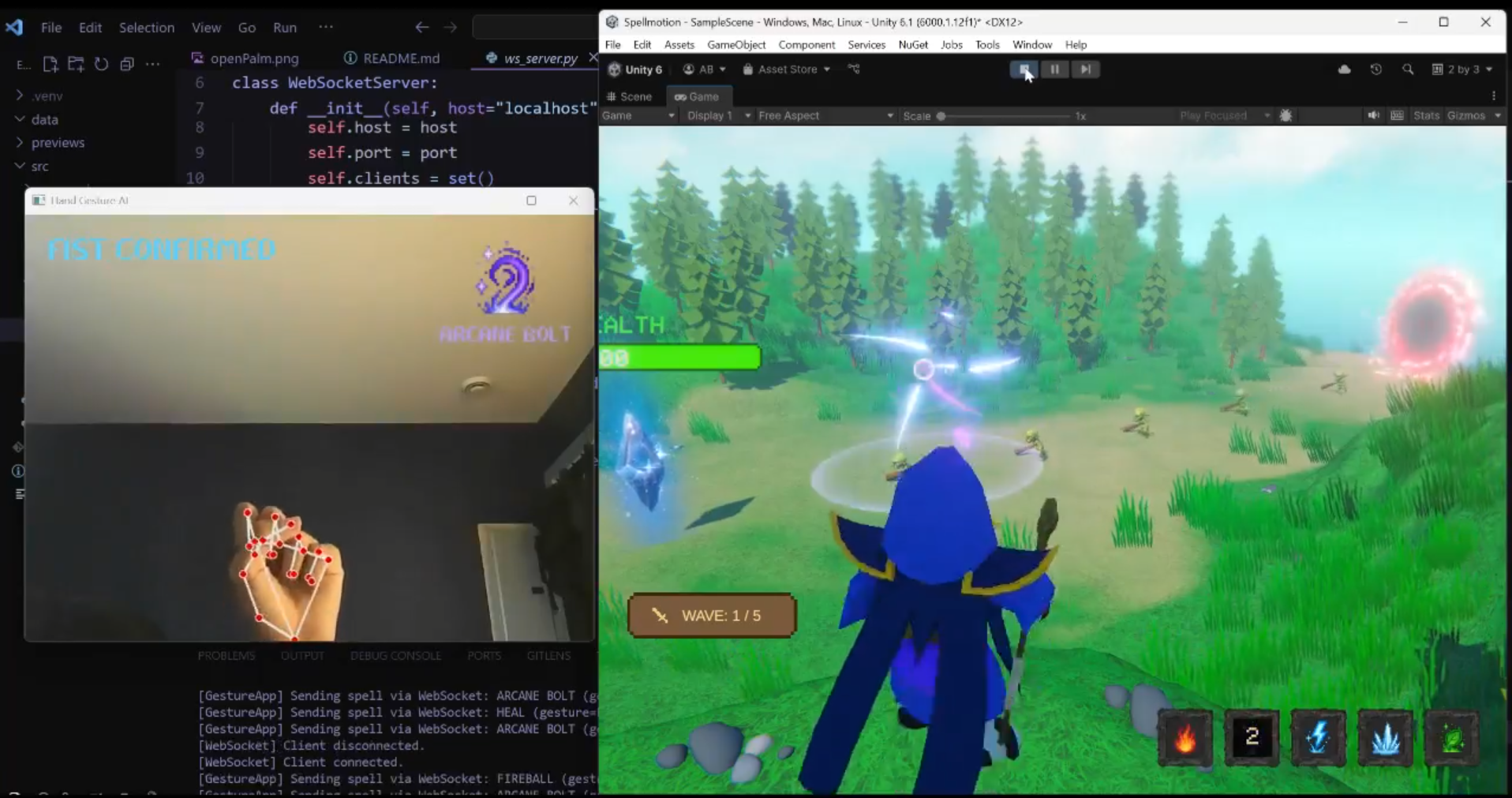

I took on this solo project to explore computer vision through a practical application. Because I enjoy development, I decided to combine this technology with video game creation and design an immersive experience in which natural hand gestures replace traditional physical controls. The goal was to turn theoretical image processing concepts into an intuitive, responsive way to control a virtual environment.

Contribution

As the sole developer, I built the entire system, starting with a Python application dedicated to gesture recognition. I used MediaPipe and OpenCV to detect hand landmarks and designed classification algorithms that translate specific gestures into spell commands. On the gameplay side, I built a complete defense game in Unity, including combat logic, particle systems for magic effects, and enemy wave management. I also kept the two sides synchronized through bidirectional WebSocket communication, which transmits JSON data almost instantly so that gameplay stays smooth.
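The gesture-to-spell step described above can be illustrated with a minimal sketch. It assumes the landmarks arrive as 21 `(x, y)` points in MediaPipe Hands order (wrist at index 0, fingertips at 8, 12, 16, 20, PIP joints at 6, 10, 14, 18). The finger-extension rule, the `SPELLS` table, and the spell names are hypothetical and are not taken from the project itself.

```python
import math

# MediaPipe Hands landmark indices (21 points per hand).
WRIST = 0
FINGER_TIPS = {"index": 8, "middle": 12, "ring": 16, "pinky": 20}
FINGER_PIPS = {"index": 6, "middle": 10, "ring": 14, "pinky": 18}

# Hypothetical gesture-to-spell table, for illustration only.
SPELLS = {
    ("index",): "fireball",
    ("index", "middle"): "lightning",
    ("index", "middle", "ring", "pinky"): "shield",
}


def extended_fingers(landmarks):
    """Return the fingers whose tip is farther from the wrist than its PIP joint."""
    wrist = landmarks[WRIST]
    extended = []
    for name, tip in FINGER_TIPS.items():
        pip = FINGER_PIPS[name]
        if math.dist(landmarks[tip], wrist) > math.dist(landmarks[pip], wrist):
            extended.append(name)
    return tuple(extended)


def classify_spell(landmarks):
    """Map the set of extended fingers to a spell name, or None if unrecognized."""
    return SPELLS.get(extended_fingers(landmarks))
```

In a real pipeline the points would come from the hand landmarks MediaPipe reports for each webcam frame; the thumb is left out here because a distance-to-wrist rule is less reliable for it.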

Approach

My strategy relied on a decoupled architecture to get the best performance out of each component. I separated the heavy computer vision processing, handled by the Python script, from the graphics rendering, handled by the Unity engine. This modular approach let me isolate and refine the accuracy of gesture detection independently of the game engine. To tie everything together, I chose a local WebSocket connection, which keeps latency low enough for real-time control and turns a simple webcam into a capable game controller.
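In a decoupled setup like this, the only thing that crosses the boundary between the vision process and the game is a small JSON message. The sketch below shows one way such a message could be built and validated. The field names (`type`, `spell`, `confidence`, `sent_at`) are assumptions made for illustration, not the project's actual schema, and the WebSocket transport itself is left out.

```python
import json
import time


def encode_spell_message(spell, confidence, sent_at=None):
    """Serialize a recognized spell as a JSON string (hypothetical schema)."""
    message = {
        "type": "spell",
        "spell": spell,
        "confidence": round(confidence, 3),
        # A send timestamp lets the receiver estimate transport latency.
        "sent_at": time.time() if sent_at is None else sent_at,
    }
    return json.dumps(message)


def decode_spell_message(raw):
    """Parse a JSON string and reject anything that is not a spell message."""
    message = json.loads(raw)
    if message.get("type") != "spell":
        raise ValueError("unexpected message type")
    return message
```

The encoded string would be handed to whichever WebSocket library sends it, and the game would parse the same fields on its side. Keeping the payload this small is one reason the vision and rendering processes can evolve independently.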

Features

- AI gesture recognition

- Real-time WebSocket communication

- Unity game engine

- Varied spell system

- Visual effects (VFX)

- Enemy wave management
